Brain-Computer Interface Generates Music Based on Mood

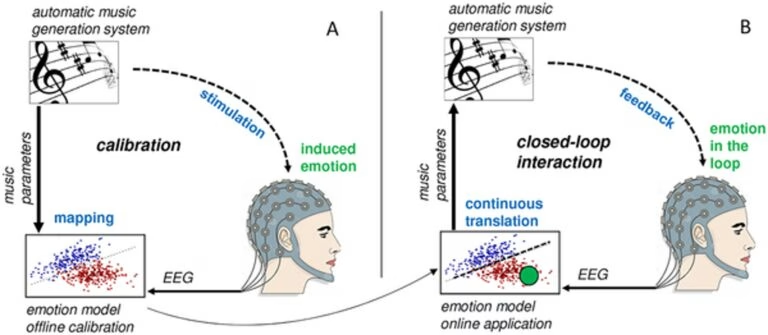

Scientists are developing a brain-computer interface (BCI) that translates a user’s emotional state into music. This innovative technology aims to help users better understand and manage their emotions by providing real-time musical feedback.

As reported in the EveryONE blog, published by PLOS ONE, the device is a collaborative project between Stefan Ehrlich of the Technische Universität München and Kat Agres of the National University of Singapore.

How the Music-Based BCI Works

The device translates brain activity associated with specific emotional states into a continuously adapting musical representation. The ever-changing music generated by the device creates awareness of the user’s emotional state, allowing for emotional regulation. It’s designed to be a tool for interacting with emotions by actively listening and responding to them, according to Ehrlich.
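To make the idea of a "continuously adapting musical representation" concrete, here is a minimal sketch of how an estimated emotional state might be mapped to musical parameters. It is not the researchers' method. The valence/arousal representation, the `MusicParams` type, the `emotion_to_music` function, and all numeric ranges are assumptions chosen for illustration only.

```python
from dataclasses import dataclass


@dataclass
class MusicParams:
    tempo_bpm: float   # beats per minute
    mode: str          # "major" or "minor"
    loudness: float    # relative loudness in [0, 1]


def clamp(x: float, lo: float, hi: float) -> float:
    """Restrict x to the closed interval [lo, hi]."""
    return max(lo, min(hi, x))


def emotion_to_music(valence: float, arousal: float) -> MusicParams:
    """Map a hypothetical emotion estimate to musical parameters.

    Assumption: valence and arousal are each scaled to [-1, 1].
    Higher arousal gives faster, louder music; non-negative valence
    selects a major mode, negative valence a minor mode.
    """
    v = clamp(valence, -1.0, 1.0)
    a = clamp(arousal, -1.0, 1.0)
    energy = (a + 1.0) / 2.0            # rescale arousal to [0, 1]
    tempo = 60.0 + energy * 80.0        # illustrative range: 60 to 140 BPM
    loudness = 0.3 + energy * 0.6       # illustrative range: 0.3 to 0.9
    mode = "major" if v >= 0.0 else "minor"
    return MusicParams(tempo_bpm=tempo, mode=mode, loudness=loudness)


if __name__ == "__main__":
    print(emotion_to_music(valence=0.5, arousal=-0.2))
```

In a real system the inputs would come from a trained model operating on brain signals, and the mapping would likely be far richer than three parameters.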

Testing and Future Applications

The BCI has been tested on young adults with depression. Although ease of use varied among participants, Agres reported that all of them successfully self-evoked emotions by recalling specific memories, which demonstrates the potential for emotional control using the technology. The approach may be particularly relevant to people who often express their identity through music.

The researchers encountered challenges in creating a continuous, musically cohesive soundscape that adapts seamlessly to fluctuating brain states. Agres highlighted the importance of real-time adaptability, meaning the system must react promptly to changes in brain signals.
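One common way to reconcile a fluctuating input with a cohesive output is to smooth the signal before it drives the music. The sketch below uses exponential smoothing as an illustration of that trade-off. It is an assumed technique, not one the article attributes to the researchers, and the `smooth` function and its `alpha` parameter are hypothetical names.

```python
def smooth(readings, alpha=0.2):
    """Exponentially smooth a sequence of noisy estimates.

    alpha close to 1 reacts quickly to new readings (more adaptive);
    alpha close to 0 changes slowly (more musically stable).
    """
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    smoothed = []
    current = None
    for r in readings:
        # The first reading initializes the state; later ones are blended in.
        current = r if current is None else alpha * r + (1.0 - alpha) * current
        smoothed.append(current)
    return smoothed


if __name__ == "__main__":
    noisy = [0.0, 1.0, 0.2, 0.9, 0.1, 0.8]
    print(smooth(noisy, alpha=0.3))
```

Choosing `alpha` is the design tension in miniature: a larger value tracks the listener's state more closely, while a smaller value avoids abrupt musical jumps.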

The second round of testing is underway, including healthy participants and individuals with major depressive disorder. The ultimate goal is to aid in the treatment of stroke patients experiencing depression, using the device to provide effective emotional support and regulation.
