By 2026, the digital music ecosystem was supposed to be a golden age for independent artists. Instead, it became the scene of a meticulously orchestrated, multimillion-dollar digital bank robbery.

On March 19, 2026, a 54-year-old North Carolina musician named Michael Smith stood before a federal judge in Manhattan and pleaded guilty to a sweeping wire fraud conspiracy. For seven years, Smith operated a shadow empire of artificial intelligence and automated bot networks to steal millions of dollars in royalty payments from major streaming platforms like Spotify, Apple Music, and Amazon Music.

It is the first criminal prosecution for AI-assisted streaming fraud in United States history, shedding a harsh light on the deep vulnerabilities baked into the modern music industry.

What exactly did Michael Smith plead guilty to, and what is his punishment?

Michael Smith pleaded guilty to one count of conspiracy to commit wire fraud for operating a massive artificial streaming network. As part of his plea agreement, Smith is legally required to forfeit $8,091,843.64—the exact amount of illicit proceeds he extracted from the platforms.

Originally facing up to 60 years in prison under a three-count 2024 indictment, Smith’s plea deal significantly reduces his legal exposure. He now faces a maximum of five years in federal prison, with sentencing scheduled for July 29, 2026.

How did a single person generate billions of fake music streams?

To pull off an $8 million heist, Smith required an endless supply of music and an invisible army of listeners. He solved both problems using emerging technology.

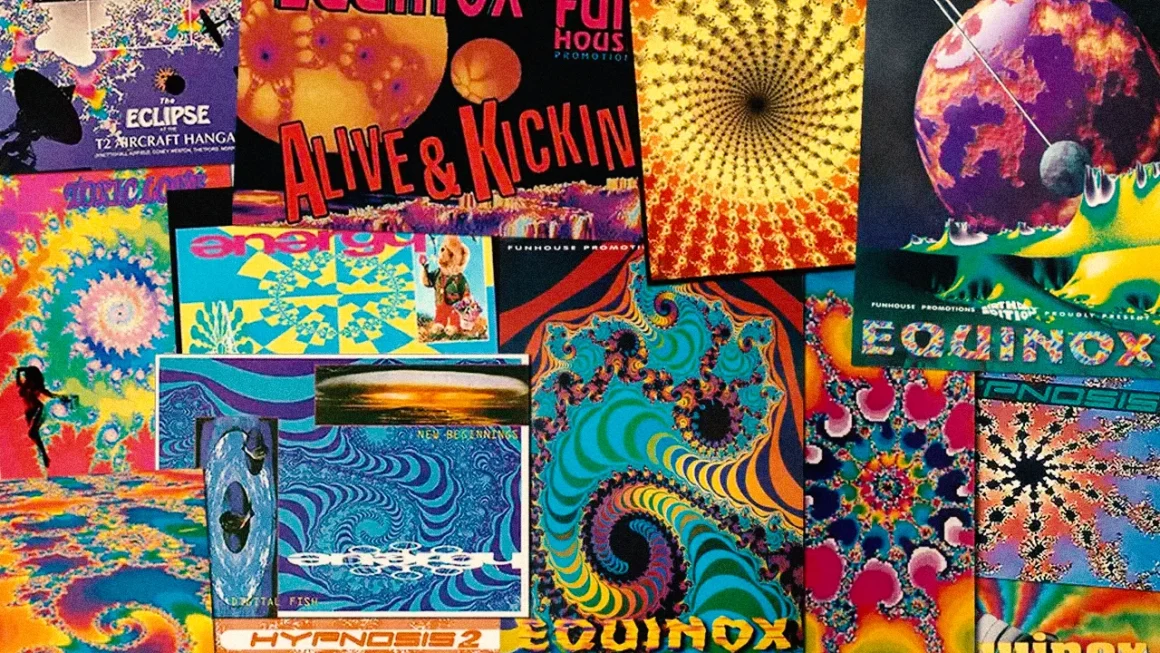

In 2018, Smith partnered with the CEO of an AI music company to mass-produce synthetic audio. Under a formal agreement, he received thousands of computer-generated tracks every week. In a 2019 email uncovered by federal investigators, his AI supplier joked, “Keep in mind what we’re doing musically here… this is not ‘music,’ it’s ‘instant music’ ;)”.

With the music secured, Smith orchestrated a massive bot network using the following strategies:

- Fake Accounts: He purchased bulk email addresses to create up to 10,000 active, automated listener profiles.

- Cost Efficiency: He grouped these bot accounts into platform “family plans” to minimize his operational overhead.

- Automated Playback: He deployed cloud computing servers and custom macro scripts to force web browsers to play his AI music 24 hours a day.

Why didn’t the streaming platforms catch the fraud immediately?

Streaming giants utilize sophisticated algorithms to detect fraud. If an unknown artist suddenly receives a billion streams on a single song, alarms trigger. Smith knew this, so he designed his operation to hide in plain sight.

To avoid anomalous streaming patterns, Smith took his billions of automated streams and diluted them across hundreds of thousands of AI-generated tracks. He assigned these tracks randomly generated, obscure artist names, like “Zygophyceae,” to blend into the background noise of the platform. Furthermore, he routed his bot traffic through Virtual Private Networks (VPNs) to mask the fact that millions of streams were originating from a single house in North Carolina.

Who actually pays for AI streaming fraud?

The victims of this crime are not just multi-billion dollar tech corporations; they are working musicians.

Streaming services operate on a “pro-rata” royalty model. They pool together subscription revenues and divide them up based on an artist’s percentage of total platform streams. When Smith’s bots artificially inflated the total number of streams by the billions, it diluted the value of every genuine stream.
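The dilution effect can be illustrated with a little arithmetic. The sketch below is a simplified model of a pro-rata split, not any platform's actual payout formula; the pool size, artist names, and stream counts are made-up numbers chosen only to show the mechanism.

```python
def pro_rata_payouts(royalty_pool, streams_by_artist):
    """Split a fixed royalty pool in proportion to each artist's share of total streams."""
    total_streams = sum(streams_by_artist.values())
    return {
        artist: royalty_pool * streams / total_streams
        for artist, streams in streams_by_artist.items()
    }


# Hypothetical figures for illustration only.
pool = 1_000_000.0
genuine = {"artist_a": 600_000, "artist_b": 400_000}

# Payouts when only genuine streams exist.
before = pro_rata_payouts(pool, genuine)

# The same pool once a bot-driven catalog adds 250,000 artificial streams.
with_bots = dict(genuine, bot_catalog=250_000)
after = pro_rata_payouts(pool, with_bots)

for artist in genuine:
    print(artist, round(before[artist]), "->", round(after[artist]))
print("bot_catalog ->", round(after["bot_catalog"]))
```

Because the pool is fixed, every dollar paid to the artificial catalog in this model comes directly out of the genuine artists' shares, even though their own stream counts never changed.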

“Although the songs and listeners were fake, the millions of dollars Smith stole was real,” stated U.S. Attorney Jay Clayton following the guilty plea. “Millions of dollars in royalties that Smith diverted from real, deserving artists and rights holders.”

How is the music industry fighting back against AI manipulation in 2026?

The Smith conviction has catalyzed a systemic shift in how digital service providers handle platform security. The industry is moving rapidly to eradicate bot farms through collaborative and punitive measures:

- Aggressive Financial Penalties: Apple Music has instituted a severe penalty system, deducting up to 50% of potential royalties from accounts linked to algorithmic manipulation.

- Advanced AI Detection: Spotify is heavily investing in robust music spam filters and “content mismatch” protocols to stop attackers from monetizing fake tracks before payouts occur.

- Global Collaboration: Major industry players—including Amazon Music, Spotify, and YouTube Music—have banded together to form the Music Fights Fraud Alliance (MFFA), a global task force dedicated to sharing forensic data and eradicating artificial streaming.

As the line between human creation and automated generation continues to blur, the conviction of Michael Smith stands as a stark warning: the tools of the future are already here, and they are being used to pick the pockets of the present.

